The last module is on Docker Swarm, and we see how easy it is to set up the entire Airflow stack just by running a few swarm commands. In the beginning, we learn all the Swarm concepts, architecture, commands and networking. We then translate the Docker Compose file into docker service commands. Subsequently, we create a Swarm cluster and start the Airflow services on it one by one.
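The translation from a Compose service to a `docker service create` command can be sketched roughly as follows. This is only an illustration and not the course's actual tooling: the helper `service_create_command`, the service dictionary, the image name `my-registry/airflow:2.0.1` and the network name `airflow-net` are all hypothetical. Only the `docker service create` flags (`--name`, `--replicas`, `--network`, `--env`, `--publish`) are real Docker CLI options.

```python
import shlex


def service_create_command(name, service, network=None):
    """Build a `docker service create` argument list from a Compose-like dict.

    Only a handful of Compose keys are handled; this is a sketch, not a
    complete converter.
    """
    cmd = ["docker", "service", "create", "--name", name]
    if "replicas" in service:
        cmd += ["--replicas", str(service["replicas"])]
    if network:
        cmd += ["--network", network]
    for key, value in service.get("environment", {}).items():
        cmd += ["--env", f"{key}={value}"]
    for port in service.get("ports", []):
        cmd += ["--publish", port]
    # The image comes after all options; the container command follows it.
    cmd.append(service["image"])
    cmd += service.get("command", [])
    return cmd


# Hypothetical webserver service, shaped like an entry in a Compose file.
webserver = {
    "image": "my-registry/airflow:2.0.1",  # hypothetical image name
    "command": ["webserver"],
    "ports": ["8080:8080"],
    "environment": {"AIRFLOW__CORE__EXECUTOR": "CeleryExecutor"},
    "replicas": 1,
}

command = service_create_command("airflow-webserver", webserver, network="airflow-net")
print(shlex.join(command))
```

Building the command as a list and joining it with `shlex.join` only for display keeps quoting safe if the list is later passed to `subprocess.run`.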

In the second module, we investigate Airflow 2.0 and understand its advantages over Airflow 1.x. We explore the Airflow HA architecture and discuss each system requirement. After this, we acquire the machines from AWS and containerise the applications one by one using Docker Compose. At the end, we run multiple Airflow schedulers and benchmark them.

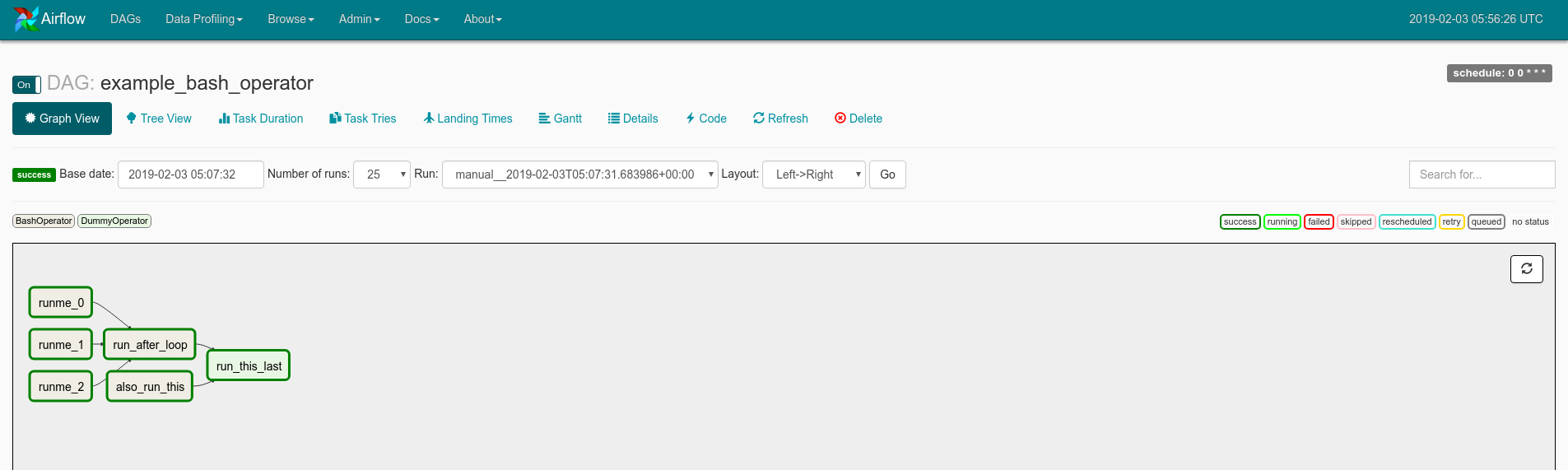

When I started configuring Airflow in my organisation, I spent many weeks writing Docker Compose files for each Airflow component. The Airflow community provides a single Docker Compose file which installs all the components on a single machine, but in production we set up each component on a different machine. Also, there is no Docker image available on the Docker registry to start Airflow through Docker Swarm. Overall, I spent many sleepless nights achieving a fault-tolerant, resilient, distributed, highly available Airflow using Docker Swarm. I consolidated all my learnings and knowledge into this course so that others don't need to struggle as I did.

The primary objective of this course is to achieve resilient Airflow using Docker and Docker Swarm. I am using the latest stable Airflow (2.0.1) throughout this course. At first, we study all the required Docker concepts. Don't worry if you have no prior experience with Docker; I cover all the necessary Docker concepts used in this course.

CloudWatch Logs. The template enables Apache Airflow logs in CloudWatch at the "INFO" level and up for the Airflow scheduler log group, Airflow web server log group, Airflow worker log group, Airflow DAG processing log group, and the Airflow task log group, as defined in Viewing Airflow logs in Amazon CloudWatch.

dags: an important folder. Every DAG definition that you place under the dags directory is picked up by the scheduler.

scripts: we have a file called airflow-entrypoint.sh in which we place the commands that we want to execute when the Airflow container starts.

.env: the file that we use to supply environment variables.
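To make the role of the `.env` file mentioned above more concrete, here is a minimal sketch of the simple `KEY=VALUE` format such a file typically uses, together with a small parser. The sample values are hypothetical and are not taken from the tutorial. The variable names follow Airflow's `AIRFLOW__SECTION__KEY` convention for overriding configuration through the environment. The parser `parse_env` is an illustration only and ignores quoting and variable expansion.

```python
def parse_env(text):
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    env = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if not sep:
            continue  # ignore lines that have no '=' at all
        env[key.strip()] = value.strip()
    return env


# Hypothetical .env contents, for illustration only.
SAMPLE_ENV = """\
# Airflow settings supplied to the containers
AIRFLOW__CORE__EXECUTOR=CeleryExecutor
AIRFLOW__CORE__LOAD_EXAMPLES=False
"""

env = parse_env(SAMPLE_ENV)
for key, value in env.items():
    print(f"{key} -> {value}")
```

In practice Docker Compose reads the file itself, so a parser like this is mainly useful for checking which variables a container will receive.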
The template uses Public routing over the Internet. It uses the Public network access mode for the Apache Airflow Web server in WebserverAccessMode: PUBLIC_ONLY.

Amazon S3 bucket. The template creates an Amazon S3 bucket with a dags folder. It's configured to Block all public access, with Bucket Versioning enabled, as defined in Create an Amazon S3 bucket for Amazon MWAA.

Amazon MWAA environment. The template creates an Amazon MWAA environment that's associated to the dags folder on the Amazon S3 bucket, an execution role with permission to AWS services used by Amazon MWAA, and the default for encryption using an AWS owned key, as defined in Create an Amazon MWAA environment.

… those sites for all the info that I mixed and matched to create this tutorial.

Step 1: Open VS Code, create a project folder on the desktop, and open it. I am going to name it airflowdocker.

Step 2: Install the Docker Desktop application on your machine and launch it, then wait until you see the green running sign in the menu bar.
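As a rough sketch, the airflowdocker project folder from Step 1 could also be scaffolded with a few lines of Python instead of by hand. The layout below (a dags folder, scripts/airflow-entrypoint.sh and a .env file) is assumed from the files named in this tutorial. The helper `scaffold` is hypothetical, and the demo writes into a temporary directory so that it has no side effects.

```python
import tempfile
from pathlib import Path


def scaffold(root):
    """Create the assumed project layout under `root` and list what exists."""
    root = Path(root)
    (root / "dags").mkdir(parents=True, exist_ok=True)
    (root / "scripts").mkdir(exist_ok=True)
    # Empty placeholders; their real contents are written later by hand.
    (root / "scripts" / "airflow-entrypoint.sh").touch()
    (root / ".env").touch()
    return sorted(p.relative_to(root).as_posix() for p in root.rglob("*"))


# Demo in a throwaway directory; point `scaffold` at your desktop for real use.
with tempfile.TemporaryDirectory() as tmp:
    created = scaffold(Path(tmp) / "airflowdocker")
    for entry in created:
        print(entry)
```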
